Acumatica 2026 R1: Latest Acumatica AI-Enabled Product Release Delivers Intelligence that Works for You

Today’s service-focused businesses need an all-in-one, AI-powered professional services solution to manage...

Many of today’s nonprofits operate on legacy software solutions and multiple applications that take their ...

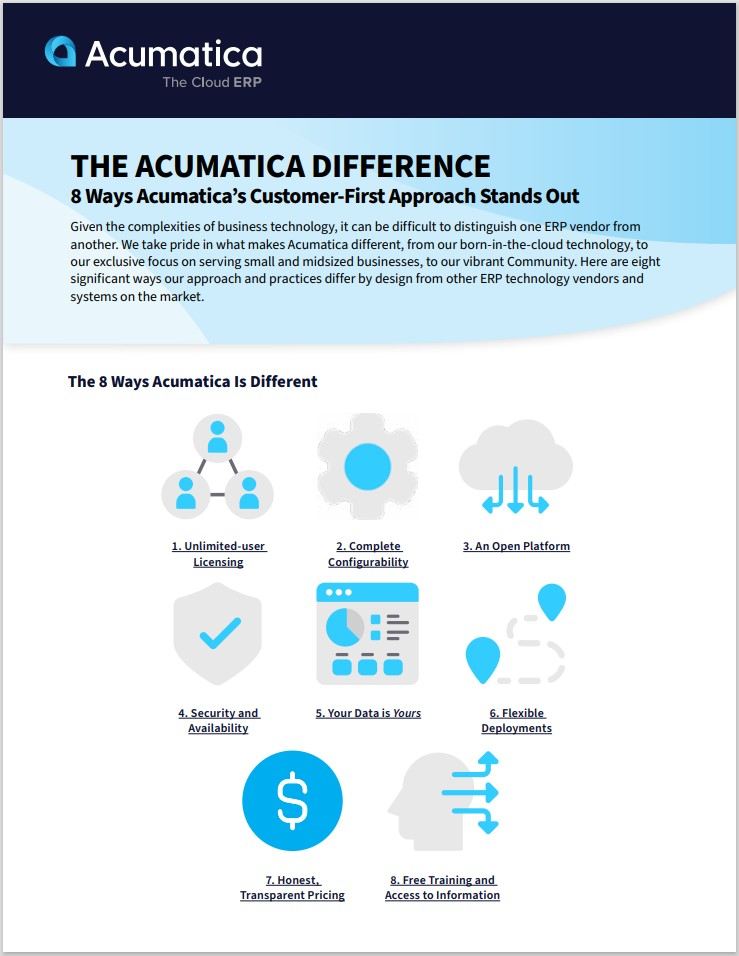

Explore how Acumatica’s customer-first approach, innovative technology, and flexible solutions redefine ERP for small and midsized businesses.

In today’s fast-moving, digital world, incorporating AI into your business has moved from a future endeavo...

In Episode 8 of The Acumatica ERP Podcast, Acumatica customer Smith & Long’s Patryk Kubiszyn explains how ...

As a business leader, do you need to understand—and use—the debt-to-equity ratio? The answer is “yes.” And...

Discover why upgrading from basic accounting tools to AI-powered Cloud ERP is critical for SMBs in today’s fast-paced, data-driven world.

As a company that strongly believes in the power of technology and automation, Fabuwood knew Acumatica was...

MODEX 2026 is in the books, and according to Debbie Baldwin, Director Product Management (Manufacturing), ...

What are the Oracle layoffs telling the technology community? Its priorities are shifting, from SMB needs ...

Earth Day 2026 marks the 56th year people around the world have made sustainability and environmental prog...

What is an accounting journal entry, and how does it affect your business’s success? This guide will expla...
